
AI Integration in Everyday Software

Integrate LLMs into your software to automate tasks and generate intelligent insights. Enhance user interactions with advanced language capabilities.


Vision Transformer in Python: Working, Architecture, and Code

Learn how Vision Transformers work in Python using PyTorch through a practical implementation on the EuroSAT dataset. Explore patch embeddings, positional encoding, self-attention mechanisms, transformer encoder architecture, attention visualizations, and real-world computer vision applications in modern AI systems.
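A Vision Transformer first splits an image into fixed-size, non-overlapping patches. It flattens each patch into a vector before applying a learned linear projection. The minimal sketch below covers only the splitting and flattening step, on a toy single-channel image in plain Python. The function name `image_to_patches` and the 4×4 input are hypothetical. A PyTorch implementation would commonly do this with a strided `nn.Conv2d` or `Tensor.unfold` instead.

```python
from typing import List

Image = List[List[float]]  # rows of pixel values (single channel)


def image_to_patches(image: Image, patch_size: int) -> List[List[float]]:
    """Split an H x W image into non-overlapping patch_size x patch_size
    patches and flatten each patch row-major into a vector."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")

    patches = []
    # Walk patches left-to-right, top-to-bottom.
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[r][c]
                for r in range(top, top + patch_size)
                for c in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


if __name__ == "__main__":
    # Toy 4x4 image with pixel values 0..15.
    toy = [[float(4 * r + c) for c in range(4)] for r in range(4)]
    for i, p in enumerate(image_to_patches(toy, patch_size=2)):
        print(i, p)
```

With a 2×2 patch size, the 4×4 image yields four 4-dimensional patch vectors; the first is `[0.0, 1.0, 4.0, 5.0]`. In a ViT, each vector would then be projected by a learned matrix and combined with a positional encoding before entering the encoder.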

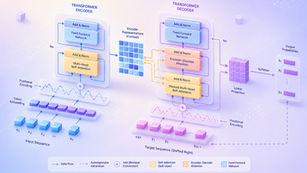

How Seq2Seq Transformers Work: A Practical Perspective

A practical deep dive into Seq2Seq Transformers, covering their evolution from RNNs to attention-based architectures, their core working principles, and their mathematical foundations. This blog connects theory with concrete implementation details, helping readers understand how modern encoder–decoder models power tasks like translation, summarization, and generative AI.

Diffusion Models in Generative AI: Concepts, Process, and Applications

Diffusion models are transforming generative AI by learning how to convert random noise into highly detailed and realistic outputs. Widely used in modern image generation systems, these models follow a step-by-step denoising process that often yields higher sample quality than VAEs and more stable training than GANs. This blog breaks down how diffusion models work, their core concepts, and why they are shaping the future of AI-driven content generation.
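The forward (noising) half of this process has a simple closed form in the DDPM formulation: x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε, where ᾱ_t = ∏_{s≤t} (1 − β_s). The sketch below makes several assumptions:

- It uses a linear variance schedule from 1e-4 to 0.02 over 1,000 steps, as in the original DDPM paper.
- It operates on a plain list of floats instead of an image tensor.
- The helper names are hypothetical.

The learned half, a network trained to predict and remove the added noise, is not shown.

```python
import math
import random
from typing import List


def linear_beta_schedule(num_steps: int, beta_start: float = 1e-4,
                         beta_end: float = 0.02) -> List[float]:
    """Evenly spaced noise variances beta_1..beta_T (a DDPM-style choice)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def alpha_bars(betas: List[float]) -> List[float]:
    """Cumulative products alpha_bar_t = prod_{s<=t} (1 - beta_s)."""
    out, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out


def noisy_sample(x0: List[float], t: int, a_bars: List[float],
                 rng: random.Random) -> List[float]:
    """Sample x_t ~ q(x_t | x_0) in closed form:
    x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps, eps ~ N(0, I).
    t is 1-indexed, matching the usual notation."""
    a_bar = a_bars[t - 1]
    return [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * rng.gauss(0.0, 1.0)
            for x in x0]


if __name__ == "__main__":
    rng = random.Random(0)
    a_bars = alpha_bars(linear_beta_schedule(1000))
    x0 = [1.0, -1.0, 0.5]
    print("t=1   :", noisy_sample(x0, 1, a_bars, rng))     # still close to x0
    print("t=1000:", noisy_sample(x0, 1000, a_bars, rng))  # nearly pure noise
```

Because ᾱ_t shrinks toward zero as t grows, the signal term fades and the sample approaches standard Gaussian noise. A trained diffusion model learns to run this process in reverse, one denoising step at a time.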

What is Q-Learning? Concepts, Formula, and Example

Q-learning is one of the most fundamental algorithms in reinforcement learning, enabling machines to learn optimal decisions through interaction and experience. Grounded in Markov Decision Processes and the Bellman equation, it turns trial-and-error interaction into learned, goal-directed behavior. This blog explores how Q-learning works, why it matters, and how it underpins modern reinforcement learning systems.
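The heart of tabular Q-learning is one update rule, a sample-based form of the Bellman optimality equation:

Q(s, a) ← Q(s, a) + α[r + γ·max_a′ Q(s′, a′) − Q(s, a)]

The sketch below applies this rule to a hypothetical five-state corridor. The corridor is an assumption made for illustration, not the example from the post itself. The learning rate α, discount γ, and exploration rate ε are arbitrary choices.

```python
import random
from collections import defaultdict
from typing import Dict, Tuple

# Hypothetical toy environment: a corridor of states 0..4.
# Action 0 moves left, action 1 moves right; reaching state 4 ends the
# episode with reward 1, and every other step gives reward 0.
N_STATES, GOAL, ACTIONS = 5, 4, (0, 1)


def step(state: int, action: int) -> Tuple[int, float, bool]:
    next_state = max(0, state - 1) if action == 0 else min(GOAL, state + 1)
    done = next_state == GOAL
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes: int = 500, alpha: float = 0.1, gamma: float = 0.9,
               epsilon: float = 0.1, seed: int = 0) -> Dict[Tuple[int, int], float]:
    rng = random.Random(seed)
    q: Dict[Tuple[int, int], float] = defaultdict(float)  # Q-table, starts at 0
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy action selection, breaking ties randomly.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                best = max(q[(state, a)] for a in ACTIONS)
                action = rng.choice([a for a in ACTIONS if q[(state, a)] == best])
            next_state, reward, done = step(state, action)
            # TD target: r + gamma * max_a' Q(s', a'), or just r at the goal.
            if done:
                target = reward
            else:
                target = reward + gamma * max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = next_state
    return q


if __name__ == "__main__":
    q = q_learning()
    policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)}
    print(policy)  # greedy action per non-terminal state; 1 means "move right"
```

After training, the greedy policy should move right from every state. The discount γ makes the shorter path to the reward worth more than detours.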

The Attention Mechanism: Foundations, Evolution, and Transformer Architecture

Attention mechanisms transformed deep learning by enabling models to focus on relevant information dynamically. This article traces their development and explains how they became the foundation of Transformer architectures.
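The variant that Transformers build on is scaled dot-product attention, softmax(QKᵀ/√d_k)V. The sketch below makes several simplifications:

- It uses a single head.
- It applies no masking.
- It omits learned projections.
- It uses plain lists instead of tensors.

It shows how each query produces a weighted average of the value vectors, with weights set by query-key similarity.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """softmax(Q K^T / sqrt(d_k)) V for a single head, without masking."""
    d_k = len(k[0])
    out = []
    for query in q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(query, key)) / math.sqrt(d_k)
                  for key in k]
        weights = softmax(scores)
        # Output row: the attention-weighted average of the value vectors.
        out.append([sum(w * row[j] for w, row in zip(weights, v))
                    for j in range(len(v[0]))])
    return out


if __name__ == "__main__":
    keys = [[1.0, 0.0], [0.0, 1.0]]
    values = [[10.0, 0.0], [0.0, 10.0]]
    query = [[5.0, 0.0]]  # strongly aligned with the first key
    print(scaled_dot_product_attention(query, keys, values))
```

Because the query aligns with the first key, most of the attention weight lands on the first value vector. The output is therefore dominated by `[10.0, 0.0]`. Dividing by √d_k keeps the scores from growing with dimension and saturating the softmax.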

What Is a Semantic AI Search Engine? A Practical Guide with Examples

Build a semantic AI search engine in Python that understands user intent using vector embeddings and similarity search. This guide explains how to store content in a vector database, run semantic queries, and retrieve highly relevant results based on meaning instead of exact keywords, making it ideal for modern AI-powered search applications.
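At its core, the similarity search step ranks stored embeddings by how close they are to the query embedding, most often using cosine similarity. The sketch below makes two assumptions:

- It uses hand-written three-dimensional vectors as stand-ins. Real embeddings come from an embedding model and usually have hundreds of dimensions.
- It stores them in a plain dictionary in place of a vector database.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0  # treat zero vectors as unrelated


def semantic_search(query_vec: List[float], index: Dict[str, List[float]],
                    top_k: int = 2) -> List[Tuple[str, float]]:
    """Rank stored documents by cosine similarity to the query embedding."""
    scored = [(doc, cosine_similarity(query_vec, vec)) for doc, vec in index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


if __name__ == "__main__":
    # Hypothetical 3-d "embeddings"; a real system would compute these with
    # an embedding model and store them in a vector database.
    index = {
        "How to reset a forgotten password": [0.9, 0.1, 0.0],
        "Quarterly revenue report": [0.0, 0.2, 0.9],
        "Recovering access to your account": [0.8, 0.3, 0.1],
    }
    query = [0.85, 0.2, 0.05]  # stands in for the query "I can't log in"
    for doc, score in semantic_search(query, index):
        print(f"{score:.3f}  {doc}")
```

Both account-related documents rank above the revenue report, even though neither shares a keyword with "I can't log in". This is the meaning-over-keywords behavior that semantic search relies on.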

Vector Databases with Chroma in Python: A Practical Guide

Learn how to build a practical vector database pipeline using Python and Chroma. This guide walks you through scraping website content, generating embeddings, and storing them in a Chroma vector database for semantic search and AI-powered retrieval.

Predictive Analytics with TensorFlow in Python: An End-to-End Guide

Predictive analytics with TensorFlow in Python lets you turn historical data into predictions about future outcomes using scalable deep learning models. This guide walks through the full workflow, from data preparation and model training to evaluation and deployment, using practical, real-world examples.

Biometric Palm Recognition Using Vision Transformers in Python

This blog explores biometric palm recognition using Vision Transformers in Python. It covers the core computer vision concepts behind transformer-based feature learning and demonstrates how global visual representations can be applied to palm classification tasks.

Building Stateful AI Workflows with LangGraph in Python

Explore LangGraph in Python to orchestrate multi-step AI workflows using open-source models like Mistral-7B. Build stateful, auditable, and production-ready research agents for literature review, hypothesis generation, and experiment design.

Deep Learning with Transformers in Python

This guide offers a hands-on walkthrough of experimenting with Transformers in Python, covering model preparation, fine-tuning, evaluation, and attention visualization. Designed for researchers and practitioners, it bridges theoretical understanding with practical implementation using modern transformer architectures.

Advanced Prompt Engineering: Building Multi-Step, Context-Aware AI Workflows

Advanced prompt engineering transforms how AI systems reason and respond. This guide explores multi-step workflows, contextual memory, and reasoning chains that help models like ChatGPT and Gemini handle complex, multi-step tasks more reliably.

Functional Modes of Large Language Models (LLMs) – Explained with Gemini API Examples

Large Language Models (LLMs) have evolved beyond simple text generation into multi-functional systems capable of reasoning, coding, and executing structured actions. In this blog, we break down each functional mode of LLMs and illustrate them through Gemini API examples, showing how these capabilities combine to create dynamic and intelligent AI workflows.

Building a Context-Aware Conversational RAG Assistant with LangChain in Python

Learn how to build a fully functional conversational AI assistant using Google’s Gemini models and LangChain’s Retrieval-Augmented Generation (RAG) pipeline. This hands-on tutorial walks through API setup, embedding data from your website, query contextualization, and dynamic multi-turn conversations. By the end, you’ll have a context-aware assistant that retrieves domain-specific knowledge, remembers prior exchanges, and delivers natural, grounded responses.

A Complete Guide to LangChain for AI-Powered Application Development

Learn how LangChain helps developers build intelligent, modular, and context-aware AI applications using large language models. Explore its core components, setup process, use cases, and integration with tools like LangSmith, LangGraph, and Google Gemini.
