AI Integration in Everyday Software

Integrate LLMs into your software to automate tasks and generate intelligent insights. Enhance user interactions with advanced language capabilities.

What Is a Semantic AI Search Engine? A Practical Guide with Examples

Build a semantic AI search engine in Python that understands user intent using vector embeddings and similarity search. This guide explains how to store content in a vector database, run semantic queries, and retrieve highly relevant results based on meaning instead of exact keywords, making it ideal for modern AI-powered search applications.
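
As a rough illustration of the similarity-search idea, the sketch below ranks documents by cosine similarity between vectors. The three-dimensional vectors, the `TOY_INDEX` dictionary, and the `search` helper are hypothetical stand-ins; a real system would get its vectors from an embedding model and keep them in a vector database.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def search(query_vec, index, top_k=2):
    """Return the top_k document texts most similar to query_vec."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [text for _, text in scored[:top_k]]

# Hypothetical hand-written "embeddings"; a real model produces hundreds of dimensions.
TOY_INDEX = {
    "How to reset a password": [0.9, 0.1, 0.0],
    "Best hiking trails": [0.0, 0.2, 0.9],
    "Recovering a locked account": [0.8, 0.3, 0.1],
}

# Assumed vector for a query such as "I forgot my login".
results = search([1.0, 0.1, 0.0], TOY_INDEX)
print(results)
```

The two account-related documents rank first even though they share no keywords with the query, which is the behavior semantic search aims for.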

Sentiment Analysis in NLP: From Transformers to LLM-Based Models

Discover how sentiment analysis in NLP works with Python and transformer models. Learn to classify text and extract sentiment with confidence for real-world applications.

Building Stateful AI Workflows with LangGraph in Python

Explore LangGraph in Python to orchestrate multi-step AI workflows using open-source models like Mistral-7B. Build stateful, auditable, and production-ready research agents for literature review, hypothesis generation, and experiment design.

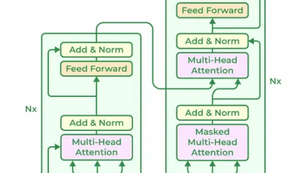

Deep Learning with Transformers in Python

This guide offers a hands-on walkthrough of experimenting with Transformers in Python, covering model preparation, fine-tuning, evaluation, and attention visualization. Designed for researchers and practitioners, it bridges theoretical understanding with practical implementation using modern transformer architectures.

A Complete Guide to LangChain for AI-Powered Application Development

Learn how LangChain helps developers build intelligent, modular, and context-aware AI applications using large language models. Explore its core components, setup process, use cases, and integration with tools like LangSmith, LangGraph, and Google Gemini.

MMLU Benchmark Explained: How AI Models Like ChatGPT Are Measured

The MMLU benchmark is a widely used standard for evaluating AI models. It tests knowledge and reasoning across 57 subjects, ranging from the humanities and social sciences to STEM and professional fields such as law and medicine. The multiple-choice test is run in a "zero-shot" or "few-shot" setting, meaning models must rely on their pre-trained knowledge with few or no worked examples in the prompt. The MMLU scor

Creating a Python Virtual Environment (venv): A Step-by-Step Guide

Learn how to use Python’s venv module to create isolated environments for your projects. This guide walks you through setup, activation, installing packages, and best practices for dependency management.
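
For a quick sense of what the `venv` module does, here is a minimal sketch that creates an environment from Python itself using the standard library's `venv.EnvBuilder`. The `create_env` helper and the `.venv` directory name are illustrative choices, and `with_pip=False` is an assumption made to keep the example fast; the more common route is running `python -m venv .venv` in a terminal.

```python
import venv
from pathlib import Path

def create_env(target_dir):
    """Create an isolated environment in target_dir and return the path to its config file."""
    # with_pip=False skips bootstrapping pip; set it to True for a normally usable environment.
    builder = venv.EnvBuilder(with_pip=False)
    builder.create(target_dir)
    # Every environment created by venv contains a pyvenv.cfg marker file at its root.
    return Path(target_dir) / "pyvenv.cfg"

# Example call (hypothetical directory name):
# create_env(".venv")
```

After creation, the environment is activated with the script under its `bin` directory (or `Scripts` on Windows).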

GLUE Benchmark: The General Language Understanding Evaluation Explained

The GLUE benchmark is a widely used evaluation framework for testing NLP models across a diverse set of language understanding tasks. This post breaks down what GLUE is, its core tasks, why it matters, and what strengths and limitations you should know, whether you're building transformers or benchmarking models for real-world applications.

SQuAD Data: The Stanford Question Answering Dataset

SQuAD (the Stanford Question Answering Dataset) is a reading-comprehension dataset of crowd-sourced questions about Wikipedia articles, where the answer to each question is a span of text from the corresponding passage.

Exploring the Latest Trends in Machine Learning: What's Shaping the Future?

Discover how machine learning is evolving in 2024–2025 with breakthroughs in multimodal AI, real-time inference, low-code platforms, and cutting-edge tools like GPT-4o, Llama 3, and PyTorch 2.x. This guide highlights key trends, frameworks, and research shaping the future of intelligent systems.

Large Language Models (LLMs): What They Are and How They Work

Large Language Models (LLMs) are advanced AI systems trained on vast datasets to understand and generate human-like text. Built on transformer architectures, they process input as tokens, predict the most likely next token, and produce coherent responses. By combining pretraining on massive text corpora with fine-tuning for specific tasks, LLMs power chatbots, coding assistants, and content generation tools across industries.
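
The "predict the most likely next token" step can be sketched as follows, assuming a model has already assigned a raw score (logit) to each candidate token. The token strings and scores are made up for illustration, and the greedy choice shown is only one decoding strategy; deployed systems often sample from the distribution instead.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {token: math.exp(score - peak) for token, score in logits.items()}
    total = sum(exps.values())
    return {token: value / total for token, value in exps.items()}

def greedy_next_token(logits):
    """Pick the single most probable next token."""
    probs = softmax(logits)
    return max(probs, key=probs.get)

# Hypothetical scores for the token following "The cat sat on the".
toy_logits = {"mat": 2.0, "dog": 0.5, "moon": -1.0}
next_token = greedy_next_token(toy_logits)
print(next_token)
```

Generating a full response repeats this step, appending each chosen token to the input before scoring the next one.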

Intelligent Conversational Systems: Chatbots and Virtual Assistants with LLMs

Large Language Models (LLMs) have revolutionized chatbots and virtual assistants by enabling them to understand context, interpret intent, and respond in natural, human-like language. Through advanced transformer architectures and massive training datasets, LLMs bring intelligence, adaptability, and personality to digital assistants, transforming how users interact with technology in customer support, personal productivity, and everyday communication.

ChatGPT and Machine Learning – Revolutionizing Conversational AI

In the rapidly evolving field of artificial intelligence (AI), ChatGPT has emerged as a groundbreaking model, showcasing the immense...

Exploring spaCy: A Powerful NLP Library in Python

spaCy is one of the most efficient and production-ready NLP libraries in Python. This guide explores how it’s used for tasks like entity recognition, text classification, chatbots, and document analysis — helping developers turn raw text into meaningful insights.

Text Preprocessing in Python using NLTK and spaCy

Text preprocessing is a crucial step in Natural Language Processing (NLP) and machine learning. It involves preparing raw text data for...
